There’s this guy named Nigel Richards, a professional Scrabble player from New Zealand, whose brain is not like yours or mine. He wins world championships in languages he doesn’t speak, like Spanish and French. The way he strategizes is, I suppose, recognizable as human thought, but just barely. I mean watch:

Richards seems to have abstracted away the concept of a “word” entirely, transforming his brain into an engine designed to arrange tiles with differently valued patterns on them, in sequences that lead to victory.

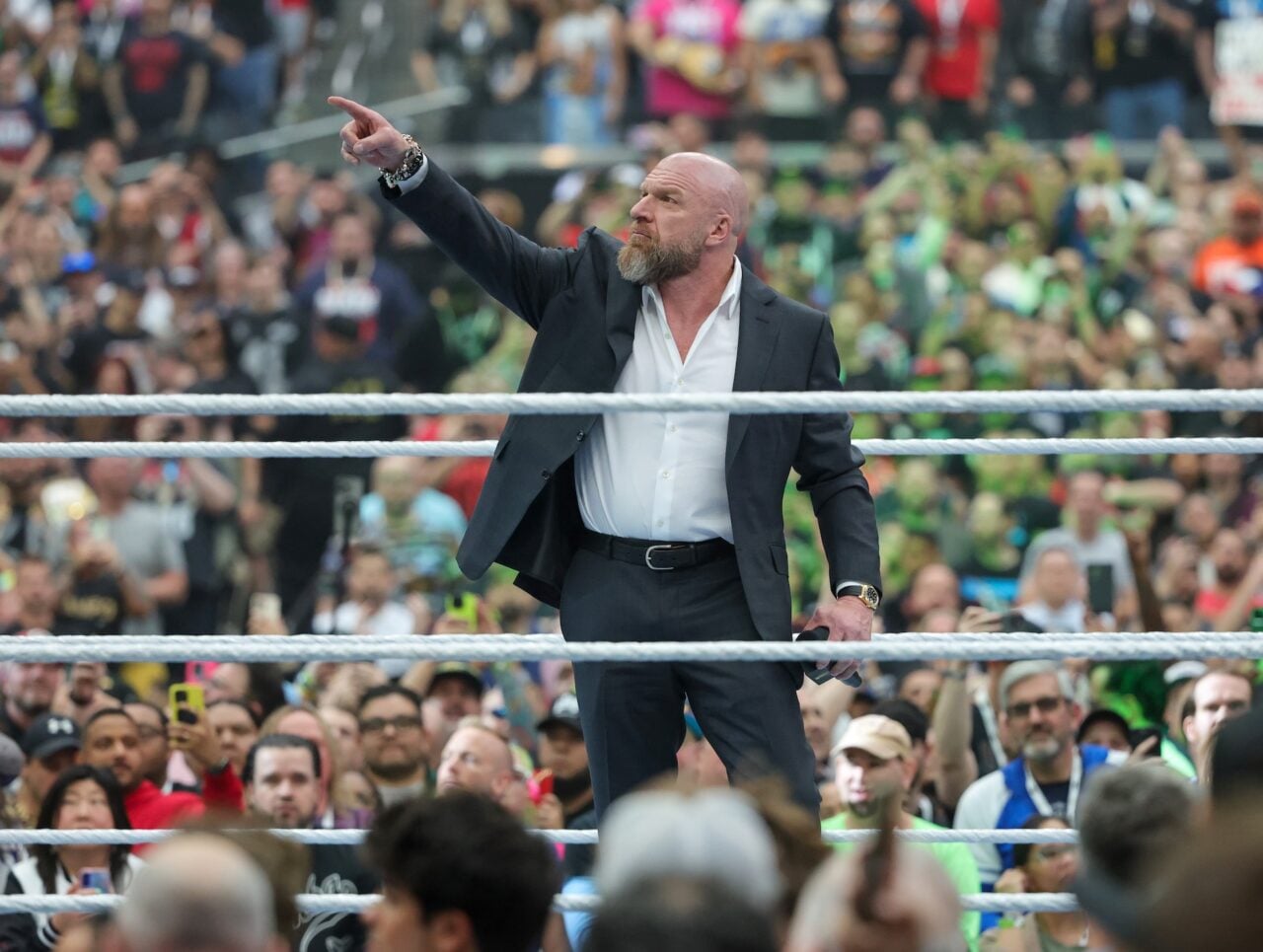

In an era in which the world’s largest entertainment company is YouTube, an algorithmic software ecosystem that delivers the user-generated content it knows you will like before you know what you will like, entertainment is like a Scrabble board. And I believe a series of WWE YouTube documentaries apparently made with glitchy AI tools may just be a form of primitive, digital Nigel Richards. Perhaps these systems can master the game of video discovery not as it’s meant to be understood, but purely for the exploits that lead to success.

The YouTube videos in question, apparently unearthed by a game designer and artist named Sam Blye, also known as ompuco, have gone viral on Bluesky over the past day or so.

found a whole operation of unmanned youtube channels making completely unchecked long form slop videos where the ai voice simulacra regularly trips up & does this for a full ten minutes every time.

all the very legit comments are like “NO! THATS NOT TRUE! YOU LIE HE DID NOT” & never acknowledge it.

— ompuco (@ompu.co) April 27, 2026 at 8:34 PM

They’re supposedly about WWE plot lines and real-world drama, but from time to time the narrator seems to have some kind of transient ischemic attack that makes him say “what,” “whoa,” and “like” with bizarrely intensifying emphasis, as if he’s trying to stay upright on a comically long rug that’s being pulled out from under him. Then the “what” turns into grunts. Then the grunts give way to the sound of a person being strangled. Before long you’re just listening to the sound of a wet mouth. Sometimes this goes on for ten minutes or more, and then the voiceover carries on like nothing happened.

This is apparently a pattern. Other users have noticed that other videos from the same account have the same glitch, and judging from some of them, it might have something to do with the pronunciation of “WWE.”

But other YouTube accounts are posting similar videos with a similar glitch:

It’s fitting that Alex Wellerstein, a scholar of nuclear war scenarios, was able to read the writing on the wall here, posting on Bluesky, “Anyone who doesn’t want more of this is being LEFT BEHIND.”

So far, no one has reported exactly who is doing this, how the voice generator goes so catastrophically awry, or why the glitchy videos are still online. Occam’s razor suggests someone is spamming the YouTube algorithm, possibly on hijacked accounts, and just hoping to be picked up by viewers with autoplay on.

One of the accounts posting these videos used to post what looks like personal content in Turkish about 18 years ago, then went dormant. Then, about a month ago, it started posting WWE documentaries with lengths ranging from 20 minutes or so to an hour or longer (depending on how long the strangulation noises drag on) at the rate of about one per day.

One perceptive YouTube commenter seemed to understand the danger of these videos, posting “make sure to delete this from your watch history y’all.” It’s hard not to notice that the glitchy videos are some of the most popular uploads from these creators. Curiosity clicks help a bit, but people like me who actually sit there and listen to the glitches for minutes at a time do two insidious things: demonstrate to the algorithm—and other YouTube users—which part is interesting, and also help build toward YouTube’s 4,000 watch-hour threshold for monetization.

Crucially, no human creator or gatekeeper has to pay attention to any of this. The uploader of these videos could be completely asleep at the wheel, not knowing or caring that the glitches are there, but benefiting from them anyway.

We’re still in the early days of slop making incursions into our media diets. The 2024-era case against AI being able to make art is pretty persuasive, but it may not matter. AI can, it appears, pop up and show humanity horrifyingly arresting junk, forms of content freed from the constraints of intention, that we’ll consume anyway.